Overview

Our research and development activities concentrate on the design and validation of Adaptive, Real-Time Learning Systems and Embedded Systems Engineering solutions for education. We integrate intelligent software architectures, learning analytics, and hardware-enabled instructional technologies to develop responsive, data-driven learning environments. Through rigorous research methodology and prototype validation, we advance innovative systems that enhance personalisation, engagement, and measurable learning outcomes.

The Central Problem: One-Size-Fits-All

Traditional digital education often takes a one-size-fits-all approach that ignores differences in students’ learning styles, prior knowledge, and pace, which leads to frustration or disengagement. Standardised assessments often fail to capture individual progress. This can restrict meaningful feedback and tailored support, ultimately lowering student engagement and academic performance.

Our R&D Strategy

We place learners’ socio-emotional needs at the forefront, integrating them with data-driven algorithms to create adaptive learning experiences that modify content, difficulty, and assessments in real time. Through personalised learning pathways, ongoing feedback, and dynamic content adjustments, our R&D strategy aims to keep students consistently challenged, engaged, and supported, enhancing learning outcomes and helping to reduce achievement gaps.

Research Areas

- Immediate feedback mechanisms

- Dynamic assessment

- Algorithmic personalisation

Including…

- Intelligent transcription-translation (real-time speech-to-text with integrated multilingual translation)

- Context-Aware Simplification (adjusting lexical and syntactic complexity)

- Semantic scaffolding (explanatory glosses, definitions, rephrasing)

- Multilingual support and culturally responsive language adaptation

Research Emphasis: Improving usability, trust, and learning efficiency through evidence-based interface design and interaction models.

Core Areas Include:

- User experience (UX) design for learning environments

- Cognitive load optimisation

- Affective interaction design (emotion-aware systems)

Methodology & Approach

Our research approach combines literature review, system design, iterative prototyping, and formative evaluation in real or simulated learning contexts. Emphasis is placed on continuous refinement based on learner data and feedback.

Research & Innovation Portfolio

Adaptive Language Mediation System: Lingualive (Cloud-Based)

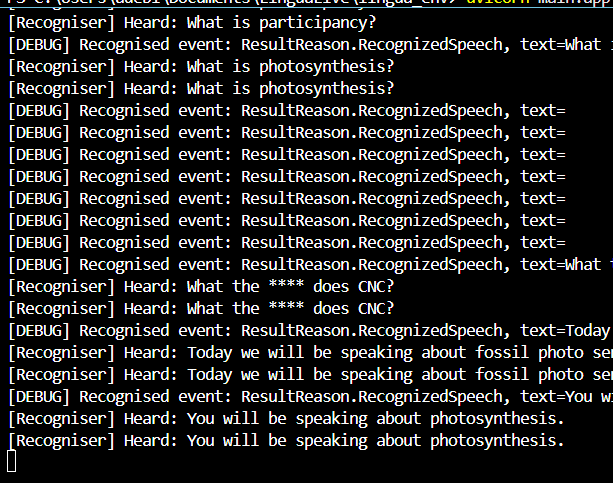

We have developed, and are now scaling up, a cloud-based speech transcription and translation system for multilingual student populations and learners with hearing difficulties.

Abstract

Language barriers remain a significant challenge to equity in increasingly internationalised educational environments. Students who are not proficient in the instructional language often experience knowledge gaps, high cognitive load, reduced comprehension, and limited participation. The project aims to design and implement a real-time speech transcription and translation system that converts instructors’ spoken discourse into translated text aligned with individual learners’ language preferences. The system is intended for deployment in classrooms, lecture halls, and digital learning environments to support multilingual student populations and enhance accessibility for learners with hearing impairments through immediate text-based mediation.

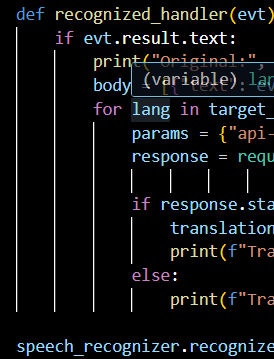

Azure Cognitive Services handles transcription and translation inference. The backend is built in Python, and the user-friendly frontend through which student clients access the system is built in JavaScript. The project will produce deployable prototypes of an adaptive language mediation system suitable for classroom use. These prototypes are intended to support student comprehension and to reduce cognitive load and learning disparities in multilingual learning environments, thereby promoting inclusive education. Practical guidelines will also be provided to interested educational institutions on how to integrate the real-time language mediation system into their learning ecosystems.

Keywords: Language barriers, AI-based language support, Speech recognition, Inclusive education, Higher education, Language mediation.

Problem statement: International students, including exchange and Erasmus participants, who learn in a language other than their first often face disadvantages such as high cognitive load, hidden knowledge gaps, reduced academic performance, and limited classroom participation because they struggle to fully understand the language of instruction. Although current technologies for language mediation have advanced significantly, effective classroom applications are still limited, particularly in terms of performance, contextual accuracy, glossary simplification, and the level of linguistic personalisation required by individual learners. Even when students have conversational proficiency, learning in an unfamiliar language can create serious challenges.

Methodology

- System Design and Architecture: Design a modular architecture integrating automatic speech recognition (ASR), neural machine translation (NMT), and a responsive user interface. Define system requirements based on multilingual classroom use cases and accessibility standards.

- Prototype Development: Implement a functional prototype that uses real-time speech processing and cloud-based or local translation APIs. Integrate user interface components for language selection, transcript display, and note-taking.

- Pilot Deployment: Deploy the system in a controlled classroom or digital learning environment. Collect live speech data to evaluate transcription accuracy, translation quality, and latency.

- Evaluation and Validation: Assess system performance using quantitative metrics (e.g., Word Error Rate, translation accuracy, response time). Gather qualitative feedback from students and instructors regarding usability, comprehension support, and accessibility impact.

- Iterative Refinement: Refine the system based on technical performance data and user feedback. Optimise linguistic accuracy, interface usability, and pedagogical alignment.
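
The Word Error Rate mentioned under Evaluation and Validation can be illustrated with a minimal sketch. It assumes whitespace tokenisation and case-insensitive matching, which are simplifications chosen here for illustration; the function name `word_error_rate` is hypothetical and is not taken from the Lingualive codebase.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return WER = (substitutions + deletions + insertions) / reference length.

    Assumption: words are split on whitespace and compared case-insensitively.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words (word-level Levenshtein distance).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# One substituted word out of five reference words.
print(word_error_rate("the lecture starts at nine", "the lecture start at nine"))  # 0.2
```

Because insertions are counted against the reference length, WER can exceed 1.0 when the recogniser produces many spurious words, so it is best read alongside latency and translation-quality measures rather than on its own.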

Expected Outcomes & Impact

- Functional Real-Time Language Mediation System: A validated prototype capable of delivering accurate, low-latency speech transcription and translation across multilingual learning environments.

- Improved Comprehension and Inclusion: Enhanced learner understanding through immediate language support, particularly for multilingual students and learners with hearing impairments.

- Increased Learner Autonomy and Engagement: Greater control over language preferences and learning pace, fostering active participation and self-regulated learning.

- Measurable Accessibility Impact: Demonstrable improvements in accessibility metrics, comprehension performance, and user satisfaction based on pilot evaluation data.

- Scalable Educational Technology Framework: A deployable model adaptable to diverse institutional contexts, supporting inclusive and technology-mediated pedagogy.

Status – Ongoing

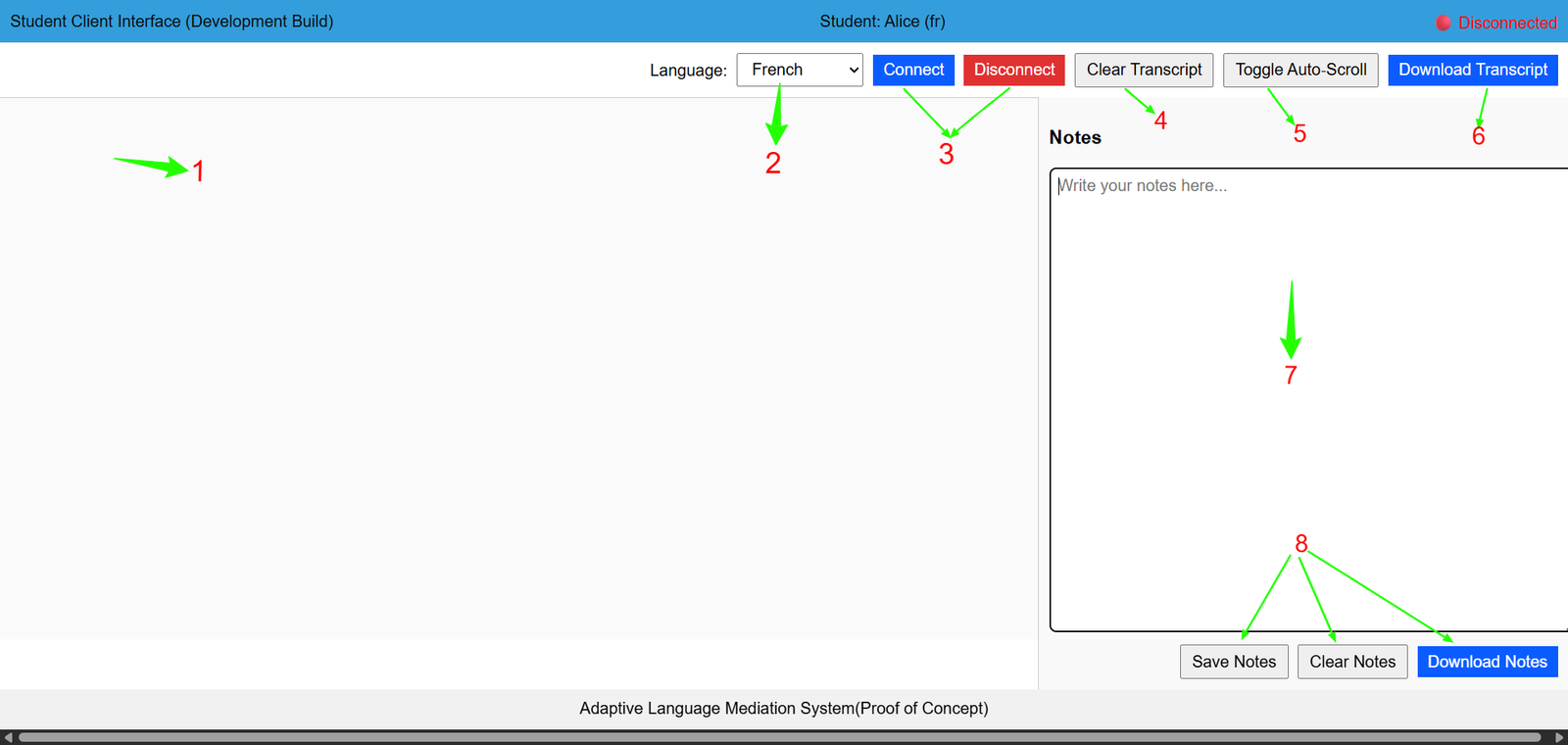

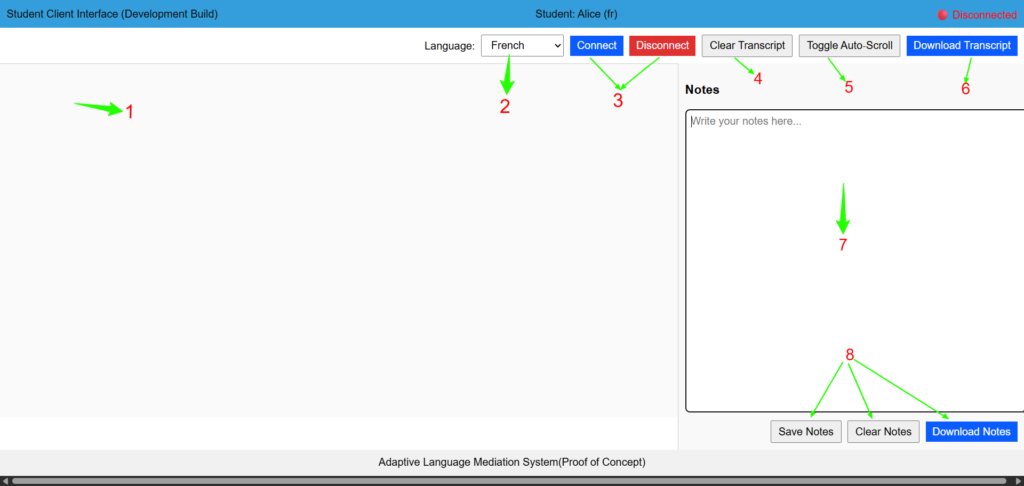

The User Interface (UI) for Lingualive

Technical and pedagogically oriented descriptions of the embedded features

(1) Main Transcription-Translation View

Displays real-time speech-to-text output alongside machine-mediated translation. Supports multimodal input processing and immediate semantic mapping across languages. Enhances comprehension monitoring, cross-linguistic comparison, and metalinguistic awareness.

(2) Language Drop-Down Menu

Allows dynamic selection of source and/or target language preferences. Enables adaptive linguistic mediation based on learner proficiency levels. Supports differentiated instruction and personalised multilingual scaffolding.

(3) Connect/Disconnect Buttons (Green/Red Indicators)

Control activation of live speech recognition and translation services. Provide real-time system-state feedback through colour-coded status indicators. Promote learner agency, session regulation, and technical reliability awareness.

(4) Clear Transcript Button

Resets the transcription display without affecting system configuration. Facilitates segmented learning cycles and focused discourse analysis. Supports iterative reflection and task-based instructional design.

(5) Toggle Auto-Scroll Button

Enables or disables automatic progression of live transcribed content. Supports cognitive load management during high-volume discourse input. Allows learners to regulate pacing and reprocess linguistic input.

(6) Download Transcript

Exports recorded multilingual interaction data in a structured format. Supports post-session analysis, corpus-based reflection, and assessment. Encourages evidence-based learning and longitudinal language tracking.

(7) Note-Taking Pane

Provides a parallel workspace for structured annotation and synthesis. Encourages active processing, semantic linking, and cognitive rehearsal. Bridges real-time input with reflective and analytical learning strategies.

(8) Save, Clear, and Download Notes Buttons

Manage user-generated annotations within the learning environment. Enable structured metacognitive documentation and revision control. Support reflective practice and knowledge consolidation processes.
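
The structured transcript export described under feature (6) could take a form like the one sketched below. The source does not specify the export format, so JSON, the `Segment` fields, and the `export_transcript` function are all assumptions made for illustration rather than the actual Lingualive schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Segment:
    """One transcribed utterance and its translation (hypothetical schema)."""
    start_seconds: float   # offset from the start of the session
    source_lang: str       # language tag of the instructor's speech, e.g. "en"
    source_text: str
    target_lang: str       # language tag selected by the learner, e.g. "es"
    translated_text: str


def export_transcript(session_id: str, segments: list[Segment]) -> str:
    """Serialise a session's segments to a JSON string for download."""
    payload = {
        "session_id": session_id,
        "segments": [asdict(segment) for segment in segments],
    }
    # ensure_ascii=False keeps non-Latin scripts readable in the exported file.
    return json.dumps(payload, ensure_ascii=False, indent=2)


segments = [
    Segment(0.0, "en", "Welcome to the lecture.", "es", "Bienvenidos a la clase."),
    Segment(4.2, "en", "Today we cover sorting.", "es", "Hoy vemos la ordenación."),
]
print(export_transcript("demo-session", segments))
```

Keeping source and translated text side by side in each segment is one way to support the post-session analysis and longitudinal language tracking that the feature description mentions.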

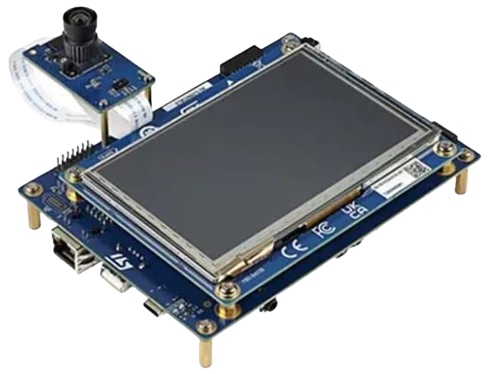

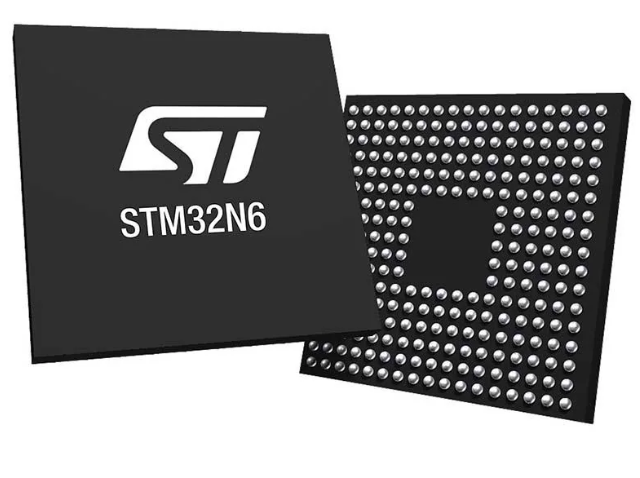

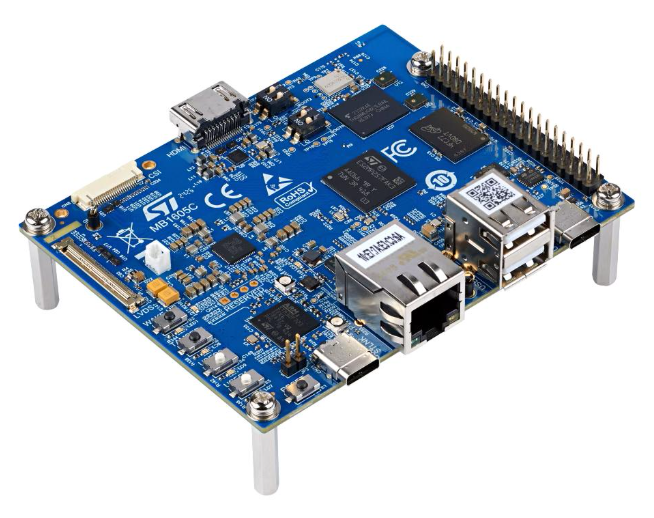

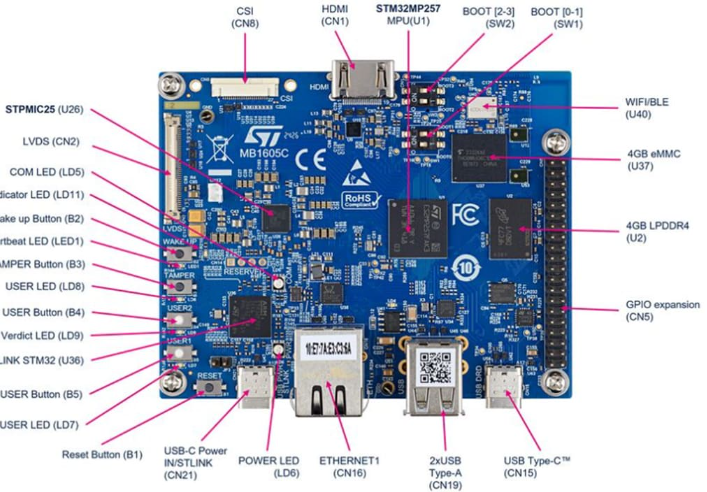

Socially-Aware Affective Computing for Adaptive Instructional Strategy (An STM32N6-Series Microcontroller-Based Project)

This project investigates learners’ emotional and social competencies, using sensor-captured multimodal data to train models that dynamically adjust learning content and difficulty levels based on learners’ emotional responses, performance, and pace.

Abstract

Affective and social dimensions significantly influence learning effectiveness, yet they remain under-integrated in higher education instructional design. Existing strategies largely prioritise cognitive performance metrics, relying on intuition and standardised frameworks rather than affective data analytics. This project proposes a socially-aware affective computing system implemented on an STM32N6-series microcontroller to capture, process, and interpret learners’ emotional states and social interaction patterns in real time. The system leverages these insights to inform adaptive instructional strategies that enhance personalisation, engagement, and inclusive digital learning environments in higher education. The project contributes to global efforts towards inclusive, quality education in support of UN SDG 4.

Keywords: Learning design, HCI-Education, Affective computing, Embedded systems, Social interactions.

Problem statement: As pedagogical activities become increasingly digitalised and automated, learners’ socio-affective development is underrepresented in higher education instructional strategies and increasingly difficult to implement effectively. As reliance on digital tools grows, so does the gap between the need for socio-emotional learning and its representation in learning resources. Additionally, traditional e-learning platforms, which are ubiquitous and often offer static content, frequently fail to engage diverse learners: by not addressing individual needs, they leave learners underchallenged or overwhelmed. These are emerging problems in digital education research.

Methodology

- System Architecture Design: Develop an embedded affective computing framework using the STM32N6 microcontroller integrated with multimodal sensors (e.g., facial expression, voice tone, or interaction data). Define affective and social indicators relevant to instructional adaptation.

- Data Acquisition and Modelling: Collect real-time emotional and social interaction data during learning sessions. Apply lightweight machine learning models optimised for edge processing to classify affective states and social engagement levels.

- Adaptive Instructional Engine Development: Design rule-based or ML-driven adaptive mechanisms that adjust instructional strategies (e.g., pacing, feedback type, content difficulty) based on detected states.

- Pilot Testing and Evaluation: Deploy the system in a controlled higher education setting. Evaluate accuracy of affective detection, system responsiveness, and impact on engagement and learning outcomes through quantitative and qualitative measures.
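
The rule-based option for the adaptive instructional engine can be sketched as follows. This is a host-side illustration in Python, not firmware for the STM32N6. The affect labels, the 0.8 accuracy threshold, the 1 to 5 difficulty scale, and all names (`Affect`, `LearnerState`, `Adjustment`, `adapt`, `next_difficulty`) are assumptions introduced for the example and do not come from the project.

```python
from dataclasses import dataclass
from enum import Enum


class Affect(Enum):
    """Hypothetical affective states emitted by the detection model."""
    ENGAGED = "engaged"
    BORED = "bored"
    FRUSTRATED = "frustrated"


@dataclass(frozen=True)
class LearnerState:
    affect: Affect
    recent_accuracy: float  # share of recent tasks answered correctly, 0.0 to 1.0


@dataclass(frozen=True)
class Adjustment:
    difficulty_delta: int  # change to apply to the current difficulty level
    pacing: str            # "slower", "unchanged", or "faster"
    feedback: str          # feedback style to use next


def adapt(state: LearnerState) -> Adjustment:
    """Map a detected learner state to an instructional adjustment."""
    if state.affect is Affect.FRUSTRATED:
        # Ease off and give more support, regardless of accuracy.
        return Adjustment(-1, "slower", "step-by-step hints")
    if state.affect is Affect.BORED and state.recent_accuracy >= 0.8:
        # Bored but performing well: the material is probably too easy.
        return Adjustment(+1, "faster", "brief confirmation")
    if state.affect is Affect.BORED:
        # Bored and struggling: keep the level, change the feedback style.
        return Adjustment(0, "unchanged", "encouragement")
    return Adjustment(0, "unchanged", "brief confirmation")


def next_difficulty(level: int, adjustment: Adjustment, lowest: int = 1, highest: int = 5) -> int:
    """Apply the adjustment while keeping the level inside the allowed range."""
    return max(lowest, min(highest, level + adjustment.difficulty_delta))


state = LearnerState(Affect.BORED, recent_accuracy=0.9)
print(next_difficulty(3, adapt(state)))  # 4
```

Explicit rules of this kind are easy to inspect and to discuss with instructors during pilot testing. An ML-driven policy could later replace `adapt` behind the same interface.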

Expected Outcomes & Impact

- Embedded Affective Detection Prototype: A functional STM32N6-based system capable of detecting and classifying learners’ affective and social states in real time.

- Adaptive Instructional Response Mechanism: An operational model that dynamically adjusts instructional variables (e.g., pacing, feedback, content difficulty) based on affective data.

- Improved Engagement Indicators: Observable increases in learner participation, sustained attention, and interaction quality during pilot implementation.

- Empirical Validation Data: Quantitative and qualitative evidence demonstrating the feasibility, accuracy, and pedagogical relevance of socially-aware adaptive instruction.

Status – Ongoing

Project Hardware and Tools Overview

Updates & Progress

Check for updates and progress reports on our current, concluded, and in-concept research projects.

Collaboration and Partnership

We welcome collaboration with researchers, educational institutions, and organisations interested in advancing adaptive learning and educational innovation.

Contact us to explore research collaboration or pilot studies.